Instagram's 'Seen' Is a Lie — And They're About to Charge You for the Proof


TL;DR

Every privacy signal on Instagram - story seen, DM read receipts, typing indicators, online presence - is a client-side call with zero server-side enforcement. Block it and you’re invisible. One line of code. This has been possible since at least 2019. The “24-hour” stories persist on Instagram’s CDN for 2 to 5 days via unauthenticated bearer token URLs. Close friends stories use the same CDN with no additional access control. DM read receipts fire through two redundant mutations - one via HTTP, one via a SharedWorker - and Instagram’s mobile “disable read receipts” toggle only blocks one of them, meaning users who disabled read receipts are still visible as “read” on the web client. Meta is now testing a $2/month subscription to sell two of these capabilities as premium features. Their own Terms of Service state the platform is provided “as is” with no guarantee that any feature works - they never promised the “seen” was accurate. I built a browser extension that does everything their subscription does and more, for free. I also designed a fix using standard encryption that would solve the problem without affecting performance. Meta has the engineering talent to build it. They chose not to.

Source code: instagram-story-research

How it started

I was scrolling Instagram on my phone and noticed something odd: someone had deleted their story, but I could still see it in the app. The cache hadn’t cleared yet. Pure curiosity kicked in. As a security researcher, when something behaves unexpectedly, I dig. I wanted to understand how story delivery works under the hood.

I opened DevTools on the web version, clicked on a story, and looked at the network tab. I expected something complicated. What I found was embarrassing.

Two calls

When you view an Instagram story, the browser makes two separate GraphQL calls:

1. PolarisStoriesV3ReelPageGalleryQuery → downloads the story

2. PolarisStoriesV3SeenMutation → tells Instagram you viewed it

The content is already in your browser before Instagram knows you saw it. The “seen” indicator - the one that shows your name in the viewer list, the one that 2 billion people trust - is a separate HTTP request that your browser sends AFTER you’ve already seen the content.

Normal flow:

sequenceDiagram

participant Browser

participant Instagram

Browser->>Instagram: GalleryQuery (fetch story)

Instagram-->>Browser: Story content

Note over Browser: Content rendered

Browser->>Instagram: SeenMutation

Note over Instagram: You appear in the viewer list

With the extension:

sequenceDiagram

participant Browser

participant Extension

participant Instagram

Note over Extension: Extracts doc_ids from JS bundles

Extension->>Instagram: Auto GalleryQuery (no click needed)

Instagram-->>Extension: Story content (intercepted via StreamFilter)

Extension-->>Browser: Content passed through

Note over Extension: Saved to disk + metadata cached + CSV exported

Browser-xInstagram: SeenMutation blocked (cancel: true)

Note over Instagram: You were never here

Block it and you’re invisible:

if (body.includes("PolarisStoriesV3SeenMutation")) {
  return { cancel: true };
}

For non-developers: when you use Instagram, your browser (the “client”) and Instagram’s servers communicate using HTTP. As MDN puts it: “When you click a link on a web page, submit a form, or run a search, the browser sends an HTTP Request to the server.” The server processes it and sends back a response. That’s all the web is: requests and responses between your browser and a server.

When you view a story, your browser sends a request to fetch the content (the server responds with the story), and then sends a second request to tell Instagram you saw it. This code checks if your browser is about to send that second “I saw this story” request. If it is, it tells your browser to not send it. That’s it. And to be clear: the cancel: true is an instruction to your own browser, the client. It’s not a message to Instagram’s servers. Nothing is sent. No packet leaves your machine. From Instagram’s perspective, the request never happened. There’s nothing to detect, nothing to log, nothing to block. The request simply doesn’t exist.

A standard browser API that Firefox and Chrome have supported for over a decade. No hack, no exploit, no reverse engineering. Just… not making a call.
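A minimal sketch of the check above, written as a pure function so it can be read outside an extension. It assumes the `details` object has the shape that `webRequest.onBeforeRequest` passes when the request body is requested (`formData` as a map of string arrays, or `raw` as a list of byte chunks). The helper names `bodyToString` and `blockSeenMutation` are illustrative and are not taken from the extension's source.

```javascript
// Name of the "seen" mutation, as observed in the network tab.
const SEEN_MUTATION = "PolarisStoriesV3SeenMutation";

// Flatten a webRequest requestBody into one searchable string.
// Assumed shape: { formData?: { [field]: string[] }, raw?: [{ bytes?: ArrayBuffer }] }.
function bodyToString(requestBody) {
  if (!requestBody) return "";
  const parts = [];
  for (const values of Object.values(requestBody.formData ?? {})) {
    parts.push(...values);
  }
  for (const chunk of requestBody.raw ?? []) {
    if (chunk.bytes) parts.push(new TextDecoder().decode(chunk.bytes));
  }
  return parts.join("&");
}

// Listener body: cancel the request only when it carries the seen mutation.
function blockSeenMutation(details) {
  const body = bodyToString(details.requestBody);
  if (body.includes(SEEN_MUTATION)) {
    return { cancel: true };
  }
  return {}; // empty object: every other request goes through unchanged
}
```

In an extension, a function like this would be registered through `webRequest.onBeforeRequest.addListener` with the `"blocking"` and `"requestBody"` options. The URL filter is left out here because the text does not state which one the extension uses.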

ELI5: When you look at someone’s story, Instagram does two things: (1) it shows you the story, then (2) it tells the other person you saw it. These are two separate steps. My extension lets step 1 happen but blocks step 2. You see the story, they never know. It’s like reading someone’s letter and putting it back in the envelope before they check.

In security terminology, this is CWE-602: Client-Side Enforcement of Server-Side Security. MITRE’s description reads: “The product is composed of a server that relies on the client to implement a mechanism that is intended to protect the server. When the server relies on protection mechanisms placed on the client side, an attacker can modify the client-side behavior to bypass the protection mechanisms.” The OWASP ASVS explicitly requires server-side enforcement of all security controls. Instagram’s “seen” indicator is a textbook case of what both standards warn against: a server that trusts the client to report its own activity.

But now Meta wants to charge for it

Instagram is testing Instagram Plus, a paid subscription currently available in Mexico, Japan, and the Philippines. The headline feature? Anonymous story viewing. Pay $1-2/month and your name won’t appear in the viewer list.

Meta projects $2.4 billion in annual revenue if 1% of users subscribe.

The full Instagram Plus package includes anonymous viewing, searchable viewer lists, extended story duration, animated Superlikes, and engagement metrics. But nobody pays $2/month for animated Superlikes. They pay to view without being seen and to know who’s viewing them.

Both of those features have always been free in the architecture.

The product is a cancel: true on a GraphQL call. That’s the $2.4 billion business.

ELI5: Instagram is testing a $2/month subscription to let you view stories anonymously. But viewing stories anonymously was always possible for free - your browser just had to stop telling Instagram you were there. Meta is charging you to flip a switch that was always in your hands. They expect to make $2.4 billion a year from this.

This isn’t new

I thought I’d discovered something. I hadn’t. Browser extensions doing this have existed since 2019. Seven years ago. Zero stars, zero attention, zero media coverage. The capability has been public for seven years and nobody outside of a handful of developers noticed.

And it’s been doable for free this entire time. Now Meta packages it as innovation and the tech press covers it like it’s new.

ELI5: This trick isn’t new. Someone published the exact same bypass in 2019. It sat on GitHub for 7 years with zero stars and zero attention. The capability was always public. Nobody cared until Meta decided to sell it.

So I built the full thing

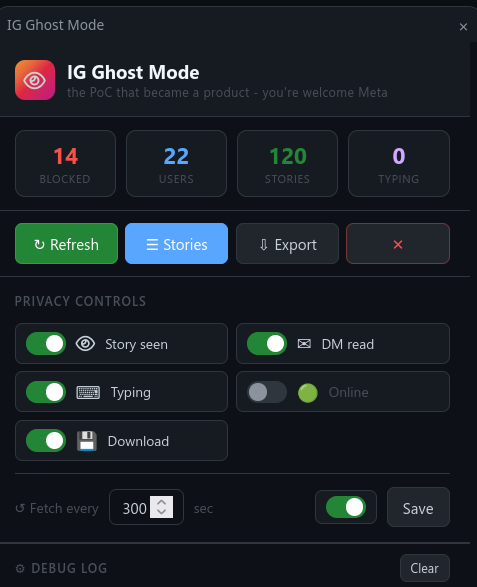

What started as a proof of concept to block one GraphQL call turned into something else. I kept pulling threads, and every thread led to the same conclusion: nothing on Instagram is enforced server-side. So I built an extension that demonstrates all of it.

It does more than Instagram Plus. For $0. And it does things Instagram Plus doesn’t even offer.

Four privacy signals blocked. Story seen receipts (PolarisStoriesV3SeenMutation), DM read receipts (useIGDMarkThreadAsReadValidationMutation), typing indicators (MQTT typing_activity), and online presence (MQTT co_presence heartbeat). Each one toggleable independently. All of them client-side trust with zero server-side enforcement. The DM read blocking is surgical: it blocks the validation mutation that tells the sender you read their message, but lets through the local read mutation so your own inbox marks the conversation as read. You see it as read, they don’t.

Full tray fetch. The extension queries Instagram’s /api/v1/feed/reels_tray/ endpoint to discover every user with active stories, not just the ones visible in the HTML. Then it fires paginated GalleryQuery requests until every story is cached. No manual clicking.

Local media archive. Every story is saved to ig_stories/<username>/<date>_<id>.jpg|mp4. Your browser already downloads this content to display it. The extension just saves it to a named file instead of letting it disappear from the temp cache. If someone deletes a story 5 minutes later, the file is already on your disk.

Deletion vs expiry. The extension distinguishes between stories that expired naturally after 24 hours and stories that were manually deleted by the poster before expiry. A deleted story is flagged as PURGED with a timestamp. The file persists. You know what was removed and when.

Seen tracking. Using the tray API’s seen timestamps, the extension knows which stories you viewed before the extension was active (SEEN - receipt was sent) and which you viewed invisibly (GHOST - receipt was blocked). This status is stored permanently and survives cache clears.

Close friends detection. Stories posted to the poster’s close friends list are identified and badged.

Asymmetric visibility. This is the worst part. The extension blocks your seen receipts to others, but your own story metadata includes the full viewer list with user IDs. You see who views your stories while being invisible when viewing theirs. Instagram Plus sells anonymous viewing. This extension gives you anonymous viewing AND full viewer analytics. For free.

Story browser. A dedicated full-tab interface with a grid layout, search by username, sort by date, expandable metadata panels per story (media ID, timestamps, dimensions, music, caption, audience, viewer count, local file path), an image/video lightbox, and direct links to open local files. It scans your downloads folder to show stories that exist on disk even after the cache is cleared.

CSV export. Every cache update exports a CSV with 18 columns including seen status, deletion status, and local file paths. A complete forensic log of every story the extension has ever captured.

WebSocket interception. Typing indicators and presence don’t use HTTP. They run over Instagram’s MQTT WebSocket connection. The extension injects a script into the page context that monkey-patches WebSocket.prototype.send and filters outgoing frames on both known gateways (gateway.instagram.com and edge-chat.instagram.com). Binary MQTT frames are decoded and matched against known markers. The frame is dropped before it leaves your browser. The recipient sees nothing.

System theme. Follows your OS dark/light preference automatically.

Persistent state. Story cache, reel IDs, and seen history survive extension reloads via storage.local.
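The WebSocket interception described above can be sketched as a wrapper around `send`. This is an illustration under assumptions, not the extension's code: the two marker strings come from the text, but how they appear inside a binary MQTT frame is assumed, the gateway check is omitted, and `FakeSocket` is a stand-in so the sketch runs under Node instead of in a page.

```javascript
// Markers named in the text; their exact position inside a frame is an assumption.
const BLOCKED_MARKERS = ["typing_activity", "co_presence"];

// Turn a string or binary frame into text so markers can be searched for.
function frameToText(data) {
  if (typeof data === "string") return data;
  if (data instanceof ArrayBuffer || ArrayBuffer.isView(data)) {
    return new TextDecoder().decode(data); // invalid bytes become U+FFFD
  }
  return ""; // Blob and other types are not inspected in this sketch
}

// Wrap a WebSocket-like class so matching outgoing frames are silently dropped.
function patchSend(SocketClass, markers = BLOCKED_MARKERS) {
  const originalSend = SocketClass.prototype.send;
  SocketClass.prototype.send = function patchedSend(data) {
    const text = frameToText(data);
    if (markers.some((marker) => text.includes(marker))) {
      return; // frame never reaches the real send()
    }
    return originalSend.call(this, data);
  };
}

// Stand-in for the browser's WebSocket so the sketch runs under Node.
class FakeSocket {
  constructor() { this.sent = []; }
  send(data) { this.sent.push(data); }
}

patchSend(FakeSocket);
const socket = new FakeSocket();
socket.send("hello");                                              // passes through
socket.send(new TextEncoder().encode("xx typing_activity xx"));    // dropped
console.log(socket.sent.length); // 1
```

In a page context the same wrapper would be applied to the real `WebSocket` class. Matching on decoded text is the simplest possible filter; a stricter version would parse the MQTT packet structure first.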

ELI5: I built a free tool that blocks every privacy signal Instagram has: story views, DM read receipts, typing indicators, online status. It also saves every story to your computer, detects deletions, and exports a full log. Think of it as a dashboard that shows you everything while making you invisible to everyone else.

“Ephemeral” content isn’t ephemeral

Instagram stories are supposed to disappear after 24 hours. They don’t.

When a story expires in the Instagram UI, the CDN URL that served the media remains accessible. I tested this: a story that expired over 2 hours ago was still fully viewable via its CDN link. No authentication required. The signed URL embedded in the original GraphQL response continues to work long after the story “disappears” from the app.

My initial assumption was that this exists because of Instagram’s Story Archive feature, which lets users save their own stories after expiry. But that doesn’t hold up: the archive persists for years, which means it almost certainly uses a different storage and access system (likely re-generated signed URLs or direct authenticated storage) rather than the same short-lived CDN tokens. The CDN token lifetime of ~5 days for a 24-hour story is not explained by the archive. It’s just a token that’s configured far longer than it needs to be for ephemeral content. The oe expiry mechanism already exists in the URL - setting it to 25 hours instead of ~120 hours would be a configuration change, not an engineering effort. The CDN URL has no per-user access control. It’s a signed URL with a bearer token, and that token’s lifetime extends well past the story’s 24-hour window. Anyone who captured the URL - via the extension’s CSV, via a network log, via a shared link - can access the media after it “expired.”

This means you can send the CDN link to someone who doesn’t even have an Instagram account, and they can view the “expired” content in a regular browser. No login, no app, no authentication.

Close friends stories are not protected either. I tested this on my own close friends stories: opening the CDN URL in a private browsing window with no Instagram session loaded the content without any issue. No cookie, no login, no account. But beyond my own test, this isn’t even a finding that requires testing - it follows directly from how CDNs work. A CDN serves static files. It validates the URL signature (oh) and checks the expiry token (oe). It does not process cookies, it does not query a user database, and it does not know what a “close friends list” is. The audience restriction happens upstream: Instagram’s GraphQL API checks whether you’re in the poster’s close friends list and only includes the CDN URL in the response if you are. But once that URL is in the API response, it’s a bearer token. The CDN serves the file to anyone who has it. A close friend who copies the URL and shares it in a group chat just made the poster’s “private” story accessible to everyone in that group, in full resolution, without an Instagram account, for up to 5 days. The CDN doesn’t know and doesn’t care.

Instagram’s “ephemeral” content model is a UI abstraction. The content isn’t deleted when it expires. It isn’t access-controlled at the CDN level. The “24 hours” is a lie told by the interface while the files persist on servers that anyone can reach with the right URL.

I decoded the CDN URL parameters on one of the expired stories in my cache. Here’s what the URL contains:

https://scontent-<edge>.cdninstagram.com/v/t51.82787-15/<filename>.heic?

stp=dst-jpg_e35_tt6 # Transcode pipeline: HEIC -> JPG, quality level

ig_cache_key=<base64> # Base64-encoded media ID (matches GraphQL response)

efg=<base64> # Encoding metadata (JSON): resolution, codec, dynamic range

_nc_ohc=<hash> # Content integrity hash (short)

_nc_oc=<hash> # Content integrity hash (long)

_nc_ht=scontent-<edge>... # Hostname lock (prevents CDN redirect abuse)

oh=00_<signature> # URL HMAC signature - NOT per-user, NOT per-session

oe=<hex_timestamp> # URL expiry: hex-encoded Unix timestamp

The hostname identifies the nearest CDN edge node (named after airport codes). The t51.82787-15 path segment identifies the content pipeline (image story). The original file in this case was Apple HEIC format, transcoded to JPG on-the-fly by the CDN via the stp parameter. The efg parameter is a base64-encoded JSON object containing encoding metadata (1080x1920 resolution, standard dynamic range, codec identifier).

The two parameters that matter for security are oh and oe. oh is the HMAC signature that authenticates the URL. It’s bound to the content path and parameters, not to any viewer identity. There’s no user ID, no session token, no cookie reference in the signature. It’s a bearer token: possession of the URL equals access to the content. oe is the URL expiry, a hex-encoded Unix timestamp.
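Decoding `oe` takes a few lines. The sketch below assumes the parameter is hexadecimal Unix seconds, as described above. The function names, the placeholder URL, and the choice to measure lifetime from the posting time are illustrative assumptions, not details from the extension.

```javascript
// Read the `oe` query parameter (hex-encoded Unix seconds) from a CDN URL.
function parseOeExpiry(cdnUrl) {
  const oe = new URL(cdnUrl).searchParams.get("oe");
  if (!oe || !/^[0-9a-fA-F]+$/.test(oe)) return null;
  return parseInt(oe, 16); // seconds since the Unix epoch
}

// Token lifetime in days, measured from an assumed reference point
// (here: the time the story was posted, in Unix seconds).
function tokenLifetimeDays(cdnUrl, postedAtSeconds) {
  const expiry = parseOeExpiry(cdnUrl);
  if (expiry === null) return null;
  return (expiry - postedAtSeconds) / 86400;
}

// Made-up example: host, path, and values are placeholders, not a real story URL.
const exampleUrl = "https://cdn.example/v/story.jpg?oh=00_placeholder&oe=65000000";
const expirySeconds = parseOeExpiry(exampleUrl);
console.log(expirySeconds); // 1694498816
console.log(new Date(expirySeconds * 1000).toISOString());
```

Comparing the decoded expiry with the story's posting time gives the lifetime figures reported in this section.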

I parsed the oe token on all stories in my local cache. The token lifetime varies by media type:

| Media type | CDN token lifetime | Range |
|---|---|---|
| Images | ~5 days average | 5.0 - 5.3 days |
| Videos | ~2 days average | 1.4 - 5.1 days |

Images consistently get a CDN token valid for over 5 days. Videos average around 2 days, likely because they’re heavier and Instagram wants to free CDN storage sooner. Some video outliers reach 5 days as well. I also observed that close friends stories follow the exact same token lifetime as public stories - no difference whatsoever. The “24-hour story” lives on the CDN for 2 to 5 days depending on media type.

Here’s the irony: the CDN already uses cryptography. The oh parameter is an HMAC signature that authenticates the URL. The oe parameter is a signed expiry timestamp. Instagram’s CDN infrastructure already implements signing, integrity checks, and token expiration. The crypto that protects Meta is already there - nobody can forge a CDN URL without the signing key. But the crypto that would protect users - encrypting the content so the seen receipt is enforced - is absent. They implemented the cryptography that prevents unauthorized URL generation (protecting their infrastructure) and skipped the cryptography that would prevent unauthorized content access (protecting your privacy). They built the lock that protects them. They chose not to build the one that protects you.

I can’t determine from the outside whether this token lifetime is consistent across all stories or varies by region, account type, or archive settings. But the fact that a single observed story had a CDN URL valid for nearly 5 days after a 24-hour “expiry” suggests that the gap between UI expiry and actual content availability is not a few minutes of cache lag. It’s days. By design.

ELI5: Instagram says your story disappears after 24 hours. It doesn’t. The photo or video stays on their servers for 2 to 5 days after it “expires.” Anyone who saved the link can still see it, no Instagram account needed. It’s like throwing a letter in the trash but the trash doesn’t get emptied for a week, and anyone can look through it. Even “close friends only” stories work the same way - the link doesn’t check who you are.

Here’s the punchline: one of Instagram Plus’s paid features is extended story duration beyond 24 hours. The content already persists beyond 24 hours on the CDN. The “24-hour limit” is a client-side UI check, not a server-side deletion. Meta is charging users to change a display timer on content that was never actually deleted. Two of Instagram Plus’s headline features - anonymous viewing and extended stories - are UI toggles on capabilities that already exist in the infrastructure. One is a cancel: true on a GraphQL call. The other is a number change in a visibility check. Neither requires any engineering work. Both are sold as premium features.

The real question

The SeenMutation has been a separate call since stories launched. Meta’s engineering team designed it this way. There are two explanations:

It’s an oversight. Meta never noticed that the seen indicator has zero server-side enforcement. For years. On a platform with 2 billion users. With thousands of engineers. Unlikely.

It’s intentional. They always planned to monetize the toggle. The separation between fetch and seen was a business decision. The “seen” was never a privacy guarantee. It was a future revenue stream parked in the architecture, waiting for the right moment.

To be fair: I can’t prove intent. What I can prove is capability. Instagram Plus also includes a rewatch count, showing how many times someone re-viewed your story. That feature is server-side. It has to be, the client can’t track other people’s repeat views. So Meta knows how to do server-side enforcement. The rewatch count proves it. They just chose not to do it for the seen. Whether that choice was made in 2016 with monetization in mind or in 2026 when someone realized they could sell it doesn’t change the outcome. The capability existed. The fix was never shipped. The subscription was.

ELI5: Meta already has server-side features that actually work (like the rewatch count). They know how to enforce things properly. They just chose not to do it for the “seen.” The question is whether that was an accident or a business decision. The rewatch count proves they have the skills. The subscription proves they have the motive.

The fix Meta won’t ship

They could fix it. And they wouldn’t lose the instant loading.

To understand how absurd the current system is, imagine a building with no security cameras. Instead, every visitor is given a camera at the door and asked to please film themselves as they walk through. The footage is sent to a security desk that logs who entered. If a visitor just turns off their camera, they walk through invisible. The building has no camera of its own. It has no way to know someone was there unless that person chose to report it.

Now imagine the building starts charging $2/month for the privilege of turning off your camera. That’s Instagram Plus. The building never had real security. It had an honor system. And now it’s monetizing the opt-out.

That’s what Instagram does with the “seen” indicator. The server gives you the content and trusts your app to report back that you viewed it. Your app is the camera. Turn it off and you’re a ghost. There’s no server-side verification, no cryptographic binding, no enforcement of any kind. The “seen” list is a list of people who chose to announce themselves.

The fix: don’t serve the content in cleartext.

The simple version: imagine Instagram sends you a locked box instead of a photo. The photo is inside, but you can’t open it. When you tap “view,” your app asks Instagram for the key. Instagram hands you the key and writes your name on the viewer list at the same time. You open the box, you see the photo. If you never ask for the key, you never see the photo, and your name never appears. A browser extension can intercept the box, but without the key it’s useless. The key and the “seen” are the same action. You can’t have one without the other. That’s the entire fix.

Current flow (broken) - the client controls the “seen”:

sequenceDiagram

participant Client

participant CDN

participant Server

Client->>CDN: Pre-fetch story

CDN-->>Client: Full content in cleartext

Note over Client: Content already readable

Note over Client: User taps on story

Client->>Server: SeenMutation ("I saw it")

Note over Server: Trusts the client, logs the seen

Note over Client,Server: The client CHOSE to report.<br/>Nothing forced it.

rect rgb(60, 10, 10)

Note over Client: With extension:

Client-xServer: SeenMutation blocked

Note over Server: No report received.<br/>Name never appears.

Note over Client: Content still readable.<br/>Already had it.

end

Proposed flow (enforced) - the server controls the “seen”:

sequenceDiagram

participant Client

participant CDN

participant KeyServer

Client->>CDN: Pre-fetch story

Note over CDN: Serves static encrypted file.<br/>Same blob for everyone.<br/>No crypto work.

CDN-->>Client: Encrypted blob

Note over Client: File cached locally, but just noise.

Note over Client: User taps on story

Client->>KeyServer: Request decryption key

Note over KeyServer: Requesting the key IS the seen.<br/>No separate call. No opt-out.<br/>Returns 32 bytes.

KeyServer-->>Client: Decryption key

Note over Client: Decrypts locally, displays story

rect rgb(10, 40, 10)

Note over Client: With extension:

Client-xKeyServer: Key request blocked

Note over Client: Still has the encrypted blob.<br/>Still can't open it.<br/>No key = no content = no bypass.

end

Here’s how it works technically, step by step:

Step 1 - Encrypt once at upload. The poster uploads their story normally. The server generates a unique AES-256-GCM content key for that story, encrypts the media, and pushes the encrypted blob to the CDN. One file per story, identical for everyone. The CDN does zero crypto work - it serves a static opaque file like it always has. The content key stays on a dedicated key server, tied to the story ID.

Why GCM and not ECB? Not all encryption is equal. There’s a wrong way to encrypt images that looks secure but isn’t. AES in ECB (Electronic Codebook) mode encrypts each small block of data independently. If two blocks of pixels are the same color, they produce the same encrypted output. The result: you can still see the shape of the image through the encryption. This is known as the “ECB penguin” problem - encrypt a photo of a penguin with ECB and you can still see the penguin. For Instagram stories, that would mean faces, text, and locations remain visible even after encryption. Useless as protection.

AES-256-GCM works differently. It mixes each block with a unique value derived from a counter, so identical pixels produce completely different encrypted output. The result is indistinguishable from random noise. No shapes, no patterns, nothing recognizable. GCM also verifies integrity: if anyone tampers with the encrypted file, the decryption detects it and fails. It’s the standard used by banks, governments, and every serious encryption implementation. It’s hardware-accelerated on every phone and laptop sold in the last decade. And it’s quantum-resistant: the best known quantum attack (Grover’s algorithm) halves the effective key length, reducing AES-256 to the equivalent of AES-128 - still far beyond anything breakable. NIST recommends AES-256 as a building block for post-quantum cryptographic stacks. This fix wouldn’t just solve today’s problem. It would survive the next generation of computing.
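The ECB-versus-GCM difference is easy to observe with Node's built-in `crypto` module. The sketch below encrypts two identical 16-byte blocks in each mode and compares the two ciphertext blocks; the variable names and the filler byte are arbitrary choices for the demonstration.

```javascript
const nodeCrypto = require("node:crypto");

const demoKey = nodeCrypto.randomBytes(32);        // AES-256 key
const pixelBlock = Buffer.alloc(16, 0x7f);         // one 16-byte block of identical "pixels"
const plaintext = Buffer.concat([pixelBlock, pixelBlock]); // two identical blocks

// ECB: each block is encrypted independently, so equal input blocks leak.
const ecb = nodeCrypto.createCipheriv("aes-256-ecb", demoKey, null);
const ecbOut = Buffer.concat([ecb.update(plaintext), ecb.final()]);
const ecbBlocksMatch = ecbOut.subarray(0, 16).equals(ecbOut.subarray(16, 32));

// GCM: the counter-mode keystream differs per block, so equal inputs diverge.
const demoNonce = nodeCrypto.randomBytes(12);
const gcm = nodeCrypto.createCipheriv("aes-256-gcm", demoKey, demoNonce);
const gcmOut = Buffer.concat([gcm.update(plaintext), gcm.final()]);
const gcmBlocksMatch = gcmOut.subarray(0, 16).equals(gcmOut.subarray(16, 32));

console.log({ ecbBlocksMatch, gcmBlocksMatch }); // { ecbBlocksMatch: true, gcmBlocksMatch: false }
```

Repeated ciphertext blocks are exactly what makes the outline of an image survive ECB encryption.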

Step 2 - Pre-fetch stays. The client still pre-fetches stories in advance from the CDN, exactly as today. The only difference: what it downloads is an encrypted blob. The CDN doesn’t know or care what’s inside. It serves a static file, same as before. Cache-efficient, fast, lightweight. AES-GCM output is the same size as the input (plus 28 bytes for the authentication tag and nonce), so there’s no bandwidth penalty.

Step 3 - Tap to decrypt. When the user taps on a story, the client sends a request to the key server: “give me the key for story X.” The key server does two things atomically: it records the seen receipt and it returns the decryption key. The client decrypts locally (hardware-accelerated, microseconds) and displays the story. The key server is a lightweight service: it receives a story ID, verifies auth, logs the seen, and returns 32 bytes. That’s it.

Step 4 - No key, no content. If a browser extension blocks the key request, the encrypted blob stays encrypted. You have a file on your machine that you can’t open. No key request means no seen receipt, but it also means no story. The content and the receipt are cryptographically bound. You can’t have one without the other.
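Steps 1 to 4 can be sketched end to end with Node's `crypto` module. Everything here is a simplified stand-in under stated assumptions: the in-memory maps replace a real key server, the blob layout (nonce, ciphertext, tag) is one possible choice, authentication of the viewer is omitted, and the function names are hypothetical.

```javascript
const nodeCrypto = require("node:crypto");

// Hypothetical in-memory stand-ins for the key server's storage.
const contentKeys = new Map(); // storyId -> 32-byte AES key
const seenLog = new Map();     // storyId -> Set of viewer IDs

// Step 1: encrypt once at upload; the returned blob is what the CDN would serve.
function encryptAtUpload(storyId, media) {
  const key = nodeCrypto.randomBytes(32);
  const nonce = nodeCrypto.randomBytes(12);
  const cipher = nodeCrypto.createCipheriv("aes-256-gcm", key, nonce);
  const body = Buffer.concat([cipher.update(media), cipher.final()]);
  contentKeys.set(storyId, key);
  seenLog.set(storyId, new Set());
  // Blob layout (an assumption): nonce (12) | ciphertext | auth tag (16).
  return Buffer.concat([nonce, body, cipher.getAuthTag()]);
}

// Step 3, server side: logging the view and releasing the key are one call.
function requestKey(storyId, viewerId) {
  const key = contentKeys.get(storyId);
  if (!key) throw new Error("unknown story");
  seenLog.get(storyId).add(viewerId); // the key request IS the seen receipt
  return key;
}

// Step 3, client side: decrypt the pre-fetched blob with the returned key.
function decryptBlob(blob, key) {
  const nonce = blob.subarray(0, 12);
  const tag = blob.subarray(blob.length - 16);
  const body = blob.subarray(12, blob.length - 16);
  const decipher = nodeCrypto.createDecipheriv("aes-256-gcm", key, nonce);
  decipher.setAuthTag(tag);
  return Buffer.concat([decipher.update(body), decipher.final()]);
}

const media = Buffer.from("pretend this is a story image");
const blob = encryptAtUpload("story-1", media);
const key = requestKey("story-1", "viewer-42");
console.log(decryptBlob(blob, key).equals(media));    // true
console.log(seenLog.get("story-1").has("viewer-42")); // true
console.log(blob.length - media.length);              // 28
```

A viewer who never calls `requestKey` holds only the blob, and `decryptBlob` throws on any other key because the GCM tag check fails. In a real service the log write and the key release would need to be a single transaction; here they are simply two statements in one synchronous function.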

What about latency? Instagram’s client pre-fetches 2-3 stories ahead with a target of under 200ms load time. With the encrypted scheme, the pre-fetch still happens - the encrypted blob is already in cache. The only new network call is the key request: a single lightweight round-trip, ~20-50ms on a typical connection. The swipe animation between stories takes about 300ms. Fire the key request at the start of the swipe, the key returns in 50ms, and the client has 250ms of margin to decrypt and render before the animation finishes. Zero perceived difference for the user. The pre-fetch target of 200ms is maintained because the bottleneck was always the download, not the decryption.
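The timing argument above is plain subtraction, shown here with the figures from the text. Decryption and render cost are ignored in this sketch, and the function names are illustrative.

```javascript
// Figures quoted in the text above.
const SWIPE_ANIMATION_MS = 300;
const KEY_ROUND_TRIP_MS = { best: 20, worst: 50 };

// Time left to decrypt and render if the key request fires at swipe start.
function renderMarginMs(animationMs, roundTripMs) {
  return animationMs - roundTripMs;
}

// True when the key arrives before the animation ends (decrypt cost ignored).
function fitsInsideSwipe(animationMs, roundTripMs) {
  return renderMarginMs(animationMs, roundTripMs) > 0;
}

console.log(renderMarginMs(SWIPE_ANIMATION_MS, KEY_ROUND_TRIP_MS.worst)); // 250
console.log(renderMarginMs(SWIPE_ANIMATION_MS, KEY_ROUND_TRIP_MS.best));  // 280
console.log(fitsInsideSwipe(SWIPE_ANIMATION_MS, 400));                    // false
```

The last line shows where the scheme would become visible to the user: a key round trip slower than the animation itself.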

What about the preview on web? On mobile, the story tray shows profile picture circles with no content preview. Everything can be encrypted, no issue. On the web client, there’s one thing that changes: when you view someone’s stories, the browser currently pre-fetches and displays a small preview of the next user’s first story in the background. That preview has to go. It shows cleartext content before any seen receipt is sent. Without it, the web client would show the next user’s profile picture and name while the encrypted blob waits in cache. When you swipe, the key request fires, the content decrypts, and it appears. The preview was a UX convenience that directly undermined privacy. Removing it is the correct trade-off.

What about infrastructure cost? The CDN stays exactly as it is: one static file per story, served to everyone. No per-user encryption, no edge computing, no change to the content delivery pipeline. The only new component is the key server, which handles the same request volume as the current SeenMutation endpoint (every story view already triggers a server call today). The only difference is the response now includes 32 bytes of key data. Same load, same scale, trivially small response. The encryption happens once at upload time, not per-request. The CDN does zero extra work.

Could per-user encryption be even stronger? In theory, yes. Instead of encrypting once at upload, the server could encrypt the story with a unique key per viewer, so sharing your key with someone else would be useless - it only decrypts your copy. But this fundamentally changes how the CDN works. A CDN is a static file cache: one file, replicated worldwide, served to everyone. Per-user encryption means each request produces a different output. That’s compute, not cache. It would require edge computing (CDN nodes encrypting on-the-fly per viewer), which transforms the entire content delivery pipeline. Netflix actually does something similar at the license level: the content key is the same, but it’s wrapped in a per-device license before delivery. An attacker who extracts the raw content key can still share it - this is exactly what Widevine L3 decryptors do. For Instagram stories that expire in 24 hours, the security gain of per-user encryption over the single-key scheme is negligible. Nobody is going to distribute AES content keys in real-time for ephemeral content. The single-key approach with a gated key server is the practical solution.

Is this a novel idea? No. This is exactly how DRM works, and it’s been broken and survived. Netflix encrypts content using CENC (Common Encryption, ISO/IEC 23001-7) with AES-128-CTR at the sample level. The encrypted segments sit on CDN, static, same for everyone. Playback is gated behind a Widevine license server that delivers the content key wrapped in a per-device license. In 2019, security researcher David Buchanan cracked Widevine L3 using differential fault analysis on the whitebox AES-128 implementation, fully removing DRM from Netflix content. Netflix’s response? They limited L3 devices to sub-720p quality and kept going. The DRM is broken at the software level and Netflix still makes billions. The model doesn’t need to be unbreakable. It needs to be good enough. For Instagram stories that expire in 24 hours, the bar is even lower than feature films. My proposal uses AES-256-GCM rather than AES-128-CTR: stronger encryption, plus built-in authentication that detects tampering. Meta built end-to-end encryption for WhatsApp. Instagram operates its own CDN (fbcdn.net, Facebook’s IP block, not a commercial CDN), meaning they control the edge nodes and the entire delivery pipeline. They have the engineering talent, the infrastructure, and the institutional knowledge to build this. They chose not to.

The pre-fetch was a UX decision. Not encrypting the pre-fetched content was a business decision. And now they sell the consequence of that business decision for $2/month. They profit from the flaw and they profit from selling you the fix. Same architecture, two revenue streams.

In both cases: every user who relied on the “seen” indicator was trusting a system with zero enforcement. It works only if every viewer uses the unmodified official client. One browser extension and it’s gone.

ELI5: Instagram sends you a locked box (the story). Right now, the box isn’t actually locked - you get the photo directly and your phone tells Instagram “I looked at it.” My fix: actually lock the box. When you want to see the story, you ask Instagram for the key. Instagram gives you the key AND writes your name on the viewer list at the same time. No key = no story and no “seen.” You can’t cheat because you can’t see the photo without asking for the key. Instagram already has the technology to do this (they built it for WhatsApp). They just chose not to.

A GDPR question

Instagram operates in the EU under GDPR.

Article 25(2) states that controllers must implement measures ensuring that “by default personal data are not made accessible without the individual’s intervention to an indefinite number of natural persons.” The “seen” indicator is exactly that mechanism: it lets users know who accessed their story. But it has zero server-side enforcement. Anyone with a browser extension can access stories without the poster ever knowing.

Article 32(1)(d) requires “a process for regularly testing, assessing and evaluating the effectiveness of technical and organisational measures for ensuring the security of the processing.” If Meta had tested the effectiveness of the “seen” indicator at any point in the last seven years, they would have found that it can be bypassed with one line of JavaScript. Either they never tested it, or they tested it and chose to monetize the bypass instead of fixing it.

To put the technical complexity in perspective: the level of skill required to bypass the “seen” indicator is so low that it could be an introductory exercise in any web security course. Intercepting a GraphQL call with a browser API that has been documented for over a decade is not a sophisticated attack. It’s not even an attack. It’s a browser feature working as intended. On platforms like Hack The Box or TryHackMe, this would be rated easy. A platform used by 2 billion people has a core privacy feature that a first-year cybersecurity student could bypass in an afternoon.

Even Proton, a Swiss privacy company, called it out publicly: “For stalkers, disgruntled exes, harassers, or obsessive viewers… this feature removes that friction” and “Meta seems willing to make posters’ loss of privacy a paid feature for viewers.” Meanwhile, Instagram is also dropping end-to-end encryption on DMs. Remove DM privacy, sell story privacy. Both at the same time.

This isn’t theoretical. According to the Office for Victims of Crime, 6.6 million adults are stalked annually in the United States. 76% of women murdered by a current or former intimate partner were stalked first. The story viewer list, broken as it is, was one of the few soft accountability mechanisms available to victims: seeing a blocked ex show up on an alt account, noticing someone viewing every story within seconds. Instagram Plus removes that accountability for $2/month. The extension I built removes it for free. The difference is that I’m demonstrating a flaw. Meta is selling it.

For context, Meta has already been fined €1.2 billion for GDPR violations related to data transfers, €390 million for forced consent on behavioral advertising, and €405 million for violating children’s privacy on Instagram. The DPC has shown it’s willing to act on Instagram-specific issues. The “seen” indicator bypass is arguably simpler to demonstrate and more directly harmful than any of those cases: it’s a privacy feature that 2 billion people rely on, it has zero enforcement, and Meta is now monetizing the bypass instead of fixing it.

I’m not a lawyer. But this feels like a question that the EDPB and national data protection authorities should be asking.

ELI5: European law says Instagram must protect your data and make sure people can’t access it without your knowledge. The “seen” feature is supposed to tell you who looked at your story. But anyone can bypass it with a free browser extension. That probably breaks the law. Meta has already been fined billions for other privacy violations. This one is arguably worse because 2 billion people rely on it and it has never actually worked.

Why Mexico, Japan, and the Philippines

Instagram Plus is being tested in Mexico, Japan, and the Philippines. Not Germany. Not France. Not Ireland, where Meta’s EU headquarters are and where the DPC has jurisdiction.

That’s not a random product rollout. That’s a legal strategy.

If Meta launched anonymous story viewing in the EU first, it would be an implicit admission: “the seen indicator was never enforced, and now we’re selling the bypass.” Any EU data protection authority could use that as grounds for a GDPR complaint under Articles 25 and 32. Meta would be admitting the flaw exists and monetizing it instead of fixing it.

By testing in countries with weaker privacy regulations, Meta avoids setting that legal precedent. If it works commercially in those markets, they can refine the product, build the revenue case, and figure out how to frame it for EU regulators later.

The question is: does testing it outside the EU change the fact that the flaw exists inside the EU right now? Every EU user’s “seen” indicator is just as broken as every Mexican user’s. The only difference is that in Mexico, Meta is honest about it (for $2/month). In the EU, they pretend it works.

ELI5: Meta launched their paid anonymous viewing in Mexico, Japan, and the Philippines - not in Europe where privacy laws are strict. If they launched it in Europe first, they’d basically be admitting “our seen feature was never real and now we’re selling the bypass.” That’s a legal problem. So they test it where nobody can sue them, and bring it to Europe later.

The ghost that likes

Here’s a fun one. With the extension active, you can like someone’s story without appearing in their viewer list. They get the notification “X liked your story” but when they check who viewed it, your name isn’t there. You’re a ghost that leaves likes.

The like and the seen are two completely independent systems. The extension blocks the SeenMutation but not the like call. Instagram never bothered to link the two. You can interact with a story without the platform registering that you viewed it.

ELI5: You can like someone’s story without showing up as a viewer. They get a notification that you liked it, but when they check who viewed the story, your name isn’t there. You’re a ghost that leaves fingerprints. Instagram never connected the “like” system to the “seen” system.

A note on intelligence use

The asymmetric visibility isn’t just a privacy concern. It’s an intelligence tool. Anyone doing surveillance on Instagram gets: invisible story viewing, full viewer lists on their own decoy stories, media downloads of deleted content, and timestamped logs. All from a browser extension. No zero-day. No exploit. Just the API working as designed.

ELI5: This isn’t just a privacy toy. It’s a surveillance kit. Someone could watch all your stories without you knowing, see who views their stories, save everything you post (even after you delete it), and keep a log with dates and times. All of this works with a free browser extension, no hacking required. Intelligence agencies and stalkers have the same tools. The only difference is intent.

Beyond stories: DMs, presence, and the same problem everywhere

The same architectural pattern likely applies across Instagram. On the web client, Instagram doesn’t even offer the “disable read receipts” toggle that the mobile app has. The content loads first, the “seen” is reported separately, just like stories.

On mobile, the read receipt toggle is mutual: turn it off and you lose the ability to see when others have read your messages. The architecture I documented on stories breaks that symmetry. Block the receipt on the web, keep seeing theirs. Same asymmetric visibility.

This isn’t a secret either. People have been doing the airplane mode trick for years: turn off your connection, open the story or DM, read it, force-close before reconnecting. The content was already cached locally, the “seen” never fires. The extension just automates what everyone already does manually.

The same logic likely applies to the “online” status. Your presence is a heartbeat your client sends. Block it and you’re invisible, but you still receive everyone else’s. Same asymmetry, same architecture. And this one is harder to fix: Instagram already offers an official “hide activity status” toggle, meaning they’ve already separated the data connection from the presence signal. They can’t fuse them back without breaking their own feature.

Instagram could fix all of this with server-side confirmation round-trips. Don’t serve the content until the receipt is acknowledged. But that would break the caching model that makes stories and messages feel instant. Performance over privacy enforcement. That’s the trade-off they made.

Update (April 20): I tested DMs and typing indicators. Every hypothesis from this section is now confirmed.

DM read receipts

Instagram uses a two-step process for DM read receipts. When you open a conversation, two separate mutations fire:

1. useIGDMarkThreadAsReadMutation → marks the thread as read (and reports to server)

2. useIGDMarkThreadAsReadValidationMutation → async confirmation via SharedWorker

The naming is misleading. I initially assumed the first mutation was local-only and the second was the server notification. Testing proved the opposite. Blocking only the Validation mutation (the second one) did nothing - the “Seen” indicator still appeared on the sender’s side. Blocking the first mutation is what actually prevents the read receipt from reaching the server. Both mutations must be blocked for complete invisibility.
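For the page-context request, the blocking decision can be sketched as a small pure function plus a webRequest listener. This is an illustration, not the extension's actual code: `decide` and `BLOCKED` are hypothetical names, the URL pattern is a guess, and it assumes the page-context request carries the same X-FB-Friendly-Name header that the worker request does. Requests that originate inside the SharedWorker may not reach this listener at all.

```javascript
// Sketch only: `decide` and `BLOCKED` are hypothetical names.
const BLOCKED = new Set([
  "useIGDMarkThreadAsReadMutation",
  "useIGDMarkThreadAsReadValidationMutation",
]);

// Return a webRequest BlockingResponse: cancel when the friendly name is on the list.
function decide(requestHeaders) {
  const header = (requestHeaders || []).find(
    (h) => h.name.toLowerCase() === "x-fb-friendly-name"
  );
  return { cancel: Boolean(header && BLOCKED.has(header.value)) };
}

// Only wire up the listener when running inside a WebExtension background script.
if (typeof browser !== "undefined" && browser.webRequest) {
  browser.webRequest.onBeforeSendHeaders.addListener(
    (details) => decide(details.requestHeaders),
    { urls: ["https://www.instagram.com/*"] }, // assumed pattern
    ["blocking", "requestHeaders"]
  );
}
```

Keeping the decision in a pure function makes it easy to check the rule without loading a browser, and the list can be extended to other mutations by name.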

The second mutation is sent through Instagram’s SharedWorker (IGDAWMainWebWorkerBundle), a separate JavaScript execution context that runs independently from the page. It has its own fetch() function that can’t be monkey-patched from the page context. Standard browser extension APIs (webRequest) don’t reliably intercept requests originating from SharedWorkers. The only way to block it is to intercept the worker’s script as it loads and inject a fetch patch directly into the worker code before it executes - which is what the extension does using Firefox’s filterResponseData API on the worker’s initialization script at /static_resources/webworker/init_script/.

```javascript
// Intercept the SharedWorker script and prepend a fetch patch (Firefox only:
// filterResponseData is not available in Chromium-based browsers)
browser.webRequest.onBeforeRequest.addListener((details) => {
  const filter = browser.webRequest.filterResponseData(details.requestId);
  const decoder = new TextDecoder("utf-8");
  let script = "";
  // Buffer the whole script; stream: true keeps multi-byte characters intact across chunks
  filter.ondata = (event) => { script += decoder.decode(event.data, { stream: true }); };
  filter.onstop = () => {
    script += decoder.decode(); // flush any buffered bytes
    const patchCode = `
      var _origFetch = self.fetch;
      self.fetch = function(input, init) {
        var fn = "";
        try {
          if (init?.headers?.get) fn = init.headers.get("X-FB-Friendly-Name") || "";
          else if (init?.headers) fn = init.headers["X-FB-Friendly-Name"] || "";
        } catch(e) {}
        // Answer the validation mutation locally instead of sending it
        if (fn === "useIGDMarkThreadAsReadValidationMutation")
          return Promise.resolve(new Response('{"data":{}}', {status: 200}));
        return _origFetch.apply(this, arguments);
      };
    `;
    filter.write(new TextEncoder().encode(patchCode + script));
    filter.close();
  };
}, { urls: ["https://www.instagram.com/static_resources/webworker/*"] }, ["blocking"]);
```

On mobile, Instagram offers a “disable read receipts” toggle, but it’s mutual: turn it off and you lose the ability to see when others have read your messages. The web architecture breaks that symmetry completely. Block both mutations, keep receiving everyone else’s receipts. You see who read your messages. Nobody sees that you read theirs.

The mobile toggle is theater. I tested this with two alt accounts: one with read receipts disabled on mobile, the other checking from the web client. When the account with “read receipts off” opens a conversation on the mobile app, the web client on the other account still shows the “Seen” indicator. The mobile toggle only controls what the mobile app reports. The server still tracks the read status internally and the web client still displays it to the receiving account. The privacy setting is enforced on the sender’s device only - not across clients. An account with disabled read receipts thinks it’s invisible. The account on the other end sees everything from a browser. This is the same client-side trust problem as stories: the privacy feature is a UI preference on one client, not a server-enforced guarantee across all clients.

Instagram doesn’t offer read receipt control on the web client at all. There’s no toggle, no setting, nothing. The web version always sends read receipts. Unless you block it yourself.

Typing indicators

This one goes deeper. The typing indicator (“User is typing…”) doesn’t use GraphQL at all. It runs over Instagram’s MQTT WebSocket connection at wss://gateway.instagram.com/ws/mqttbypass. MQTT is a lightweight messaging protocol designed for real-time communication - Instagram uses it for presence, typing, and message delivery notifications.

When you start typing in a DM, the client publishes a message to the MQTT broker. The broker pushes it to the recipient’s client, which shows “typing…” in the conversation. When you stop typing, another message clears the indicator.

Since this runs through a WebSocket in the browser, you can intercept it by monkey-patching WebSocket.prototype.send before Instagram’s code loads:

```javascript
const originalSend = WebSocket.prototype.send;
WebSocket.prototype.send = function(data) {
  if (this.url?.includes("gateway.instagram.com")) {
    // Strings are checked as-is, binary frames are decoded; Blobs are not inspected
    let text = "";
    if (typeof data === "string") text = data;
    else if (data instanceof ArrayBuffer || ArrayBuffer.isView(data))
      text = new TextDecoder("utf-8", { fatal: false }).decode(data);
    if (text.includes("typing_activity")) return; // drop
  }
  return originalSend.apply(this, arguments);
};
```

The MQTT frames are binary, but the topic names and payload markers are readable as UTF-8 strings within the binary data. Filtering on typing_activity catches the indicator messages. The frame is dropped before it leaves your browser. The recipient never sees “typing…”.

This is particularly interesting because MQTT is not HTTP. It’s a persistent bidirectional connection. But the same principle applies: the client decides what to send. The server trusts whatever the client publishes. There’s no server-side check that verifies you’re actually idle when you stop sending typing events. You could be writing a novel and the other person would see nothing.
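To show why a plain string search works on a binary frame, here is a small sketch that builds a generic MQTT 3.1.1 PUBLISH packet and scans it. The topic and payload are invented for illustration, since the real layout of Instagram's frames is not documented here, and `buildPublish` and `containsMarker` are hypothetical helper names.

```javascript
// Build a minimal MQTT 3.1.1 PUBLISH packet (QoS 0). Sketch only: it handles
// packets whose remaining length fits in one byte (under 128 bytes).
function buildPublish(topic, payload) {
  const enc = new TextEncoder();
  const t = enc.encode(topic);
  const p = enc.encode(payload);
  const remaining = 2 + t.length + p.length;
  if (remaining > 127) throw new Error("sketch handles single-byte lengths only");
  const frame = new Uint8Array(2 + remaining);
  frame[0] = 0x30;            // packet type PUBLISH, QoS 0, no flags
  frame[1] = remaining;       // remaining length
  frame[2] = t.length >> 8;   // topic length, big-endian
  frame[3] = t.length & 0xff;
  frame.set(t, 4);            // topic bytes
  frame.set(p, 4 + t.length); // payload bytes
  return frame;
}

// Decode leniently: header bytes turn into noise, embedded text survives.
function containsMarker(frame, marker) {
  const text = new TextDecoder("utf-8", { fatal: false }).decode(frame);
  return text.includes(marker);
}

// Hypothetical topic and payload, for illustration only.
const frame = buildPublish("/hypothetical_topic", '{"type":"typing_activity"}');
console.log(containsMarker(frame, "typing_activity"));
```

The search only works as long as the payload is sent uncompressed and unencrypted inside the WebSocket, which matches what the author observed but could change at any time.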

The full picture

| Signal | Mechanism | Mutation/Channel | Blockable |
|---|---|---|---|
| Story seen | GraphQL POST | PolarisStoriesV3SeenMutation | Yes |
| DM read | GraphQL POST (page + SharedWorker) | useIGDMarkThreadAsReadMutation and useIGDMarkThreadAsReadValidationMutation | Yes |
| Typing indicator | MQTT WebSocket | typing_activity on gateway.instagram.com | Yes |
| Online presence | MQTT WebSocket | Activity heartbeat | Likely yes |

Stories, DMs, read receipts, typing indicators. Every single “privacy signal” on Instagram is client-side trust with zero server-side enforcement. The extension now blocks all four. The only signal I haven’t tested yet is presence (the green dot), but given that it uses the same MQTT channel as typing, there’s no reason to believe it’s any different.

ELI5: It’s not just stories. DM read receipts (“Seen” under messages), the “typing…” bubble, and the green “Online” dot all work the same way: your phone tells Instagram what you’re doing, and Instagram trusts it. Block any of those reports and you’re invisible. You can read someone’s DMs without them knowing, type a message without the “typing…” showing up, and be online without the green dot appearing. Every privacy signal on Instagram is based on trust, not enforcement.

The WhatsApp comparison is damning. WhatsApp, also owned by Meta, has the same client-side read receipts (the blue ticks). But WhatsApp does one thing Instagram doesn’t: mutuality. Turn off your read receipts on WhatsApp and you lose the ability to see when others read your messages. It’s symmetric. Instagram on the web doesn’t even offer that toggle. And the extension breaks what symmetry exists on mobile: you block your receipts, you keep seeing everyone else’s. Meta built mutuality into WhatsApp and chose not to build it into Instagram. Same company, same engineering team, different choices. The fact that they know how to enforce fairness (WhatsApp) and chose not to (Instagram) is the pattern.

The “as is” escape hatch

Here’s the part nobody reads. Instagram’s Terms of Use, Section 7.3, state: “We will use reasonable skill and care in providing our Service to you and in keeping a safe, secure, and error-free environment, but we cannot guarantee that our Service will always function without disruptions, delays, or imperfections.” That last word is doing a lot of heavy lifting. An “imperfection” is a broken layout or a slow feed. A privacy feature that has never been enforced since launch and that anyone can bypass with one line of JavaScript is not an imperfection. It’s an architectural choice.

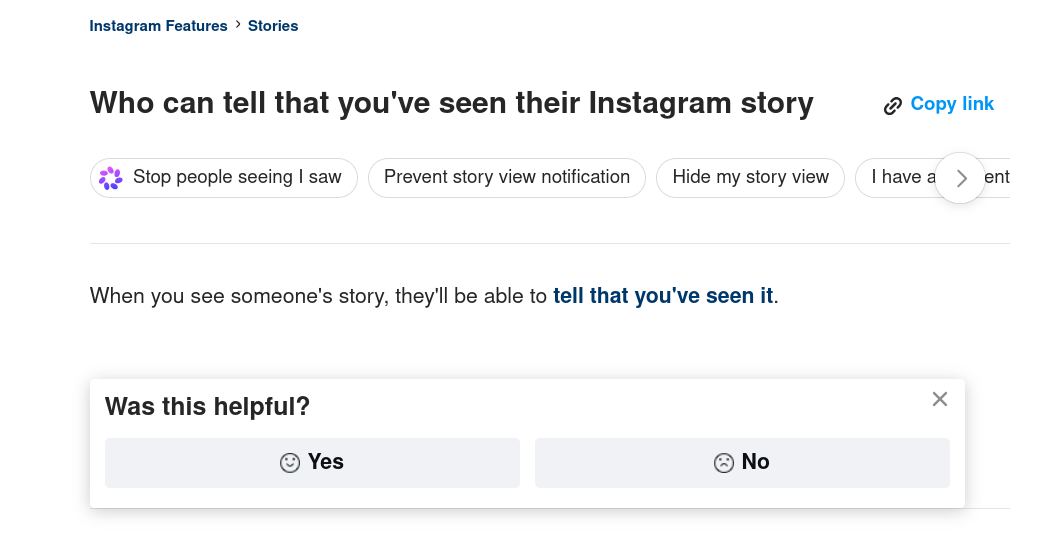

But Instagram’s own help page tells users: “When you see someone’s story, they’ll be able to tell that you’ve seen it.”

Not “might.” Not “in most cases.” “Will.” That’s a direct, unqualified promise to every user. The Terms of Use say “we cannot guarantee.” The help page says “they will be able to tell.” The code says neither is true - the “seen” is a voluntary client-side call that anyone can block. Three layers: the help page is the marketing, the ToS are the legal shield, and the code is the truth.

Section 4.2 of the same Terms includes: “You can’t do, or attempt to do, anything to circumvent, by-pass, or override any technological measures that control or limit access to the Service or data.” This could theoretically apply to blocking the seen mutation. But the “seen” is not a technological measure that controls or limits access. It’s a reporting signal that your browser sends voluntarily. Not sending a report is not circumvention - it’s absence. The extension doesn’t access anything it shouldn’t, doesn’t bypass any authentication, doesn’t forge any data. It simply tells the browser not to make a call. The same way an ad blocker tells the browser not to load a tracking pixel. uBlock Origin has been doing this for a decade and nobody has argued it circumvents technological measures.

Meta’s Terms never claim the viewer list shows everyone who viewed your story. They never guarantee that read receipts are reliable. The feature exists in the UI, 2 billion people rely on it daily, but under the Terms Meta has zero obligation to make it function correctly. The “as is” disclaimer is their escape hatch.

Nobody reads terms of service. You don’t open Instagram thinking “let me review the warranty disclaimers before checking who viewed my story.” You open the app, see a name in the viewer list, and assume it means something. Meta knows the feature is bypassable. They’ve known since 2019. They protected themselves legally by guaranteeing nothing in the fine print, marketed the feature as if it works, and now sell the bypass as a premium upgrade. They never promised it worked. They just let you believe it did.

But here’s the thing: the ToS doesn’t override the GDPR. Article 5(1)(a) establishes that personal data must be processed “lawfully, fairly and in a transparent manner.” The ICO’s guidance is explicit: “You must not process data in a way that is unduly detrimental, unexpected or misleading to the individuals concerned” and “it’s not enough to show your processing is lawful if it is fundamentally unfair to or hidden from the individuals concerned.” Article 12 requires that information about data processing be “concise, transparent, intelligible and easily accessible, in clear and plain language.” Articles 13 and 14 establish the right to be informed - accurately - about how your data is processed.

Instagram’s help page tells users “they’ll be able to tell that you’ve seen it.” That statement is false. The seen indicator has never been enforced. Telling users a privacy feature works when it doesn’t is misleading under Article 5(1)(a). You can’t disclaim your way out of a legal obligation with an “as is” clause buried in terms nobody reads. The GDPR fairness principle exists precisely to prevent this: a company cannot tell users one thing on a help page, contradict it in the ToS, and claim the ToS wins. The help page is what users see. The ToS is what lawyers see. The GDPR says the user’s understanding matters.

ELI5: European privacy law (GDPR) says you can’t mislead users about how their data is processed. Instagram’s help page says “they’ll be able to tell that you’ve seen their story.” That’s false and has been false since 2019. Meta’s Terms of Service say “we don’t guarantee anything works” - but the GDPR doesn’t care about your terms. You can’t tell users a privacy feature works, know that it doesn’t, and hide behind fine print. The law says what matters is what users understand, not what lawyers wrote.

Why I’m publishing this

I’m an Instagram user, but I wasn’t following tech news when Instagram Plus was announced. My friends told me about it. They were the ones panicking. People who check the viewer list every day to see if an ex is still watching. People who use the “seen” as a safety signal to know if someone they blocked on their main is stalking them from another account. People who felt safe because they could see who was looking, and who suddenly realized that safety was about to be sold for $2/month.

These aren’t hypothetical users. These are people I know. People I talk to every day. The fear was real. I don’t usually have my head in this kind of thing, but between what they told me and something I’d noticed earlier, something clicked. I’d seen a deleted story still visible in my app. The cache hadn’t cleared. And I thought: if I can still see a story that’s been deleted, did the poster ever know I saw it? If the content outlives the deletion, what else is the client handling on its own? I just wanted to see what was under the hood. Was the “seen” ever real to begin with? It wasn’t. The “seen” was never enforced. It was never secure. It was just a call that the official client happened to make. And it’s been bypassable for free since 2019.

I built the extension to see how far it goes. The prior art was broken (old REST endpoints that Instagram migrated away from). I wanted a working proof of concept with the real GraphQL endpoints, the real metadata, the real downloads. To document how deep the flaw goes.

A privacy feature that 2 billion people trust was never real. And now Meta wants to sell the proof.

What you can do about it

Nothing, really. If you post stories, anyone with a browser extension can view them without appearing in your viewer list. This has been true since 2019. There is no setting you can toggle, no privacy option you can enable, no way to force viewers to send the seen receipt. The “seen” list was never a complete list. It was a list of people who didn’t block the call.

If the viewer list matters to you for safety reasons, don’t rely on it. It was never reliable. If someone isn’t in your viewer list, it doesn’t mean they didn’t see your story. A name on the list only means that viewer’s client chose to tell you. That’s all it ever meant.

Instagram does offer tools for this. The “hide story from” feature blocks specific people from seeing your stories, and the “restrict” feature hides your read receipts from someone. Both are server-side. Both actually work. No extension can bypass them. But they only work if you know who to target. If someone is viewing your stories anonymously, you don’t know they’re there, so you can’t hide from them. And the restrict feature is worth noting: it proves Instagram knows how to hide read receipts server-side when they want to. They just chose not to do it for the seen indicator by default.

The closest thing to a real fix is on your side: set your account to private and regularly clean your followers list. Remove inactive accounts, people you don’t recognize, accounts that don’t feel right. A private account means only approved followers can see your stories. That’s actual access control, enforced server-side. The server won’t serve your story to someone who isn’t on your followers list. No browser extension can bypass that. No cancel: true can get around a server that refuses to send the content in the first place. That’s the difference between a privacy feature that works and one that doesn’t: server-side enforcement vs. client-side trust.
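The contrast can be sketched in a few lines. This is a toy model, not Instagram's server logic: `serveStory` and the shape of the `owner` object are hypothetical. What it shows is that the access decision happens before any content leaves the server, so there is no client-side call left to block.

```javascript
// Sketch only: `serveStory` and the `owner` shape are hypothetical.
function serveStory(owner, viewerId) {
  // Server-side enforcement: non-followers of a private account get nothing.
  if (owner.isPrivate && !owner.followers.has(viewerId)) {
    return { status: 403, body: null };
  }
  return { status: 200, body: owner.story };
}

const owner = {
  isPrivate: true,
  followers: new Set(["alice"]),
  story: "story-bytes",
};

console.log(serveStory(owner, "alice").status);    // follower: content is served
console.log(serveStory(owner, "stranger").status); // non-follower: refused
```

A client can refuse to send a report, but it cannot make the server send content it has already decided to withhold.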

If your account is public, assume everything you post is seen by everyone. Don’t post anything you wouldn’t want a stranger to screenshot, save, and keep forever. That was always the reality of public social media, but the “seen” indicator gave people the illusion of control. It made you think you knew who was watching. You didn’t. You never did.

ELI5: The only real protection is a private account with a clean followers list. When your account is private, Instagram’s server refuses to send your story to non-followers. No extension can bypass that. Everything else - the “seen” list, the viewer count - is just a list of people who chose to announce themselves. If someone isn’t on the list, it doesn’t mean they didn’t look. It means they didn’t tell you.

None of this excuses Meta. A privacy indicator that doesn’t work is worse than no indicator at all. The “seen” creates a mental model that is wrong on every level. The poster believes: I know who saw my story, the content disappears after 24 hours, and my close friends list controls who can access it. The reality: the seen is bypassable, the content persists on the CDN for days after “expiry,” the CDN URL is a bearer token that anyone can open without an Instagram account, and the close friends restriction only gates who receives the URL, not who can access the file. The seen indicator doesn’t just fail to protect privacy. It actively encourages people to share content they wouldn’t share if they understood how the system actually works. That’s worse than no indicator. No indicator at least forces caution. A broken indicator that looks functional creates a false sense of security that leads to real harm. People made real decisions based on the viewer list: who to block, who to trust, whether they were being watched. Those decisions were based on incomplete data, and Meta knew it.

Source code and technical details: instagram-story-research